Transformers

Overview

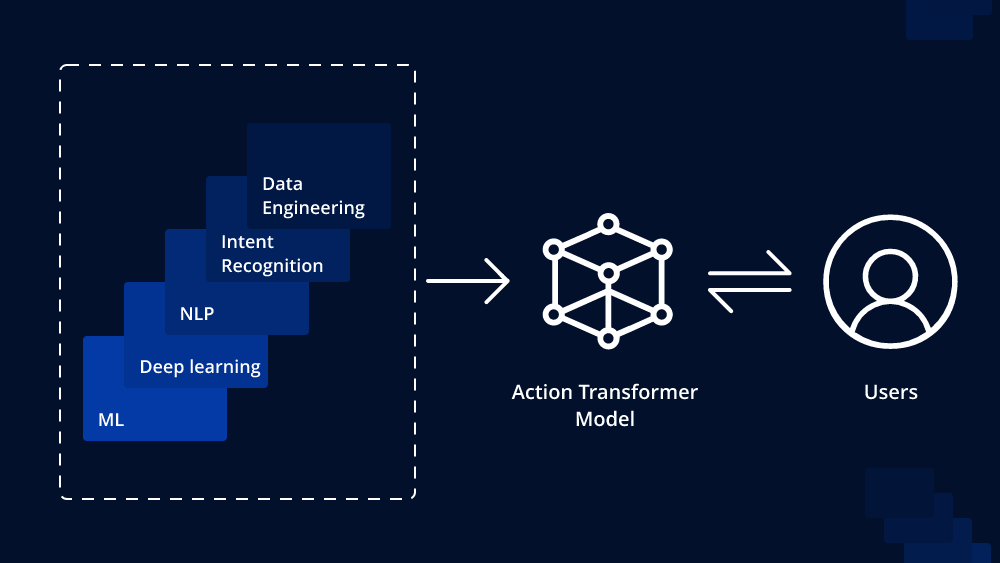

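Transformers are a class of deep learning models used mainly in natural language processing (NLP) and increasingly in computer vision. Because self-attention lets every position attend directly to every other position, they are good at capturing long-range dependencies in sequences.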

Definition

A Transformer is a neural network architecture for sequential data. Rather than stepping through tokens one at a time as a recurrent network does, it uses self-attention to process all positions of the input in parallel.
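To make the definition concrete, here is a minimal sketch of single-head scaled dot-product self-attention in plain Python. The helper names (`softmax`, `matmul`, `transpose`, `self_attention`), the toy input, and the identity projection matrices are all assumptions chosen for illustration; real models learn the projections and run on tensor libraries.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x has shape (seq_len, d_model); each projection has shape (d_model, d_k).
    Returns (output, weights), where weights[i][j] is how much position i
    attends to position j.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # every position scored against every other
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Toy input: 3 positions, 2 features each (made-up values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned projections
out, weights = self_attention(x, identity, identity, identity)
```

All rows of `weights` are computed from the same matrix product, with no dependence on a previous step's output, which is what allows the computation to run in parallel across positions.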

Types / Variants

- Encoder-only (e.g., BERT)

- Decoder-only (e.g., GPT)

- Encoder-Decoder (e.g., T5)
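One practical difference between these variants is the attention mask. Encoder-style models such as BERT let each position attend to the whole sequence, while decoder-style models such as GPT use a causal mask so a position only sees itself and earlier positions; encoder-decoder models combine both. The sketch below illustrates the two masking modes with a hypothetical `attention_weights` helper and made-up uniform scores; it is not taken from any of these models' code.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(scores, causal):
    """Turn raw scores (seq_len x seq_len) into attention weights.

    With causal=True, position i may only attend to positions j <= i;
    masked entries are set to -inf so they get zero weight after softmax.
    """
    weights = []
    for i, row in enumerate(scores):
        if causal:
            row = [s if j <= i else -math.inf for j, s in enumerate(row)]
        weights.append(softmax(row))
    return weights

# Uniform made-up scores for a 3-token sequence.
scores = [[0.0] * 3 for _ in range(3)]
bidirectional = attention_weights(scores, causal=False)  # encoder-style
causal = attention_weights(scores, causal=True)          # decoder-style
```

With uniform scores, the bidirectional weights spread evenly over all three positions, while the causal weights for the first token fall entirely on itself.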

Key Concepts

- Self-Attention

- Multi-Head Attention

- Positional Encoding

- Feedforward Layers

- Layer Normalization
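Two of these concepts are small enough to sketch directly. The example below assumes the sinusoidal form of positional encoding (one common choice; many models learn position embeddings instead) and a layer normalization without the learned scale and shift parameters. The function names and embedding values are made up for illustration.

```python
import math

def sinusoidal_positional_encoding(seq_len, d_model):
    """Sinusoidal encodings: even dimensions use sin, odd dimensions use cos."""
    pe = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each sin/cos pair shares one frequency.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe

def layer_norm(vec, eps=1e-5):
    """Normalize one vector to zero mean and (approximately) unit variance."""
    mean = sum(vec) / len(vec)
    var = sum((v - mean) ** 2 for v in vec) / len(vec)
    return [(v - mean) / math.sqrt(var + eps) for v in vec]

# Made-up token embeddings: 2 positions, d_model = 4.
embeddings = [[0.1, 0.2, 0.3, 0.4], [0.5, 0.1, 0.0, 0.2]]
pe = sinusoidal_positional_encoding(seq_len=2, d_model=4)

# Position information is added to the embeddings, then normalized per token.
with_position = [[e + p for e, p in zip(e_row, p_row)]
                 for e_row, p_row in zip(embeddings, pe)]
normalized = [layer_norm(row) for row in with_position]
```

Without the positional term, self-attention treats the input as an unordered set, since the attention scores do not depend on where a token appears.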

Tutorials

- Getting Started with Transformers: Your First 10 Minutes

• Build your first Transformer model in Python using Hugging Face’s pipeline: tokenize, run inference, and explore outputs with minimal code.

- Implementing Transformer from Scratch

• Hands-on guide to coding positional embeddings, multi-head attention, encoder/decoder layers, and a training loop in Python.

- Introduction to Transformers – PyLessons

• Learn how to implement embedding layers, self-attention, and build a basic Transformer model in TensorFlow step by step.

Videos

• Live coding demo: load a pretrained model, tokenize text, and run inference in under 40 lines with Hugging Face.

• Create a sentiment analysis classifier with NLTK VADER and a Hugging Face RoBERTa model to classify Amazon reviews.

• Step-by-step explanation, with illustrations, of how Transformer neural networks work.

Applications

- Text classification (e.g., sentiment analysis)

- Machine translation

- Question answering

- Summarization

- Image classification (Vision Transformers)

Tips & Best Practices

- Start with pretrained models to save training time

- Understand positional encodings for sequence data

- Experiment with attention visualization to interpret models
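As a starting point for the last tip, attention weights can be inspected even without a plotting library. The sketch below renders a weight matrix as a text heatmap; `render_attention`, the shade characters, the tokens, and the weights are all hypothetical. With a real model you would substitute the attention weights it exposes.

```python
def render_attention(tokens, weights, shades=" .:*#"):
    """Return a text heatmap: rows are query tokens, columns are key tokens.

    Each weight in [0, 1] is mapped to a character; darker means more attention.
    """
    width = max(len(t) for t in tokens)
    lines = []
    for token, row in zip(tokens, weights):
        cells = "".join(
            shades[min(int(w * len(shades)), len(shades) - 1)] for w in row
        )
        lines.append(f"{token:>{width}} |{cells}|")
    return "\n".join(lines)

# Hypothetical attention weights for a 3-token sentence (each row sums to 1).
tokens = ["the", "cat", "sat"]
weights = [
    [0.70, 0.20, 0.10],
    [0.10, 0.80, 0.10],
    [0.05, 0.35, 0.60],
]
print(render_attention(tokens, weights))
```

Each row shows where one token "looks"; a strong diagonal means tokens mostly attend to themselves, while off-diagonal marks point to relationships worth examining.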