Random Forests

Overview

Learn the fundamentals of Random Forests with step-by-step tutorials, video guides, and practical applications.

Definition

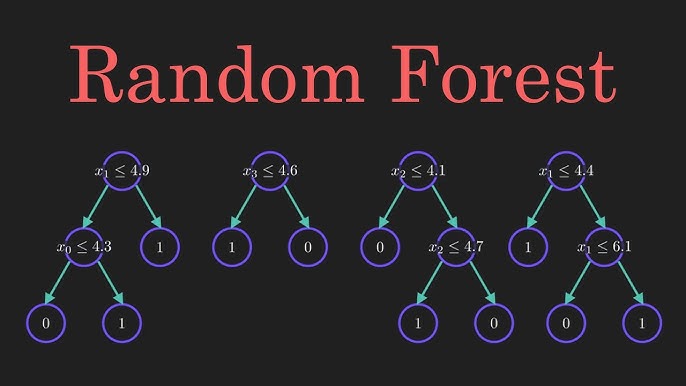

Random Forest is an ensemble learning method that builds many decision trees during training and combines their outputs: the most common class among the trees (the mode) for classification, or the mean of the trees' predictions for regression.
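The aggregation step in the definition can be illustrated without any machine-learning library. The snippet below is a minimal sketch: the per-tree predictions are made-up values, and the helper names `aggregate_classification` and `aggregate_regression` are hypothetical, chosen only for this example.

```python
from statistics import mean, mode

# Hypothetical predictions from five individual trees for a single sample.
class_votes = ["spam", "ham", "spam", "spam", "ham"]
regression_outputs = [210.0, 195.0, 205.0, 200.0, 190.0]


def aggregate_classification(votes):
    """Return the most common class label among the trees (majority vote)."""
    return mode(votes)


def aggregate_regression(outputs):
    """Return the average of the trees' numeric predictions."""
    return mean(outputs)


forest_class = aggregate_classification(class_votes)
forest_value = aggregate_regression(regression_outputs)

print(forest_class)  # spam
print(forest_value)  # 200.0
```

Note that some implementations differ in detail. scikit-learn's classifier, for example, averages the trees' predicted class probabilities rather than counting hard votes.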

Types / Variants

- Random Forest Classifier: For classification tasks using majority vote from decision trees.

- Random Forest Regressor: For regression tasks using the average of tree predictions.
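As a rough illustration of the two variants, the sketch below trains scikit-learn's `RandomForestClassifier` and `RandomForestRegressor` on synthetic data. The dataset sizes, the 75/25 split, and `n_estimators=100` are arbitrary choices for the example, not recommendations.

```python
from sklearn.datasets import make_classification, make_regression
from sklearn.ensemble import RandomForestClassifier, RandomForestRegressor
from sklearn.model_selection import train_test_split

# Classification: synthetic two-class data.
X_clf, y_clf = make_classification(
    n_samples=300, n_features=8, n_informative=4, random_state=0
)
Xc_train, Xc_test, yc_train, yc_test = train_test_split(
    X_clf, y_clf, test_size=0.25, random_state=0
)
clf = RandomForestClassifier(n_estimators=100, random_state=0)
clf.fit(Xc_train, yc_train)
clf_accuracy = clf.score(Xc_test, yc_test)  # mean accuracy on held-out data

# Regression: synthetic continuous target.
X_reg, y_reg = make_regression(
    n_samples=300, n_features=8, n_informative=4, noise=10.0, random_state=0
)
Xr_train, Xr_test, yr_train, yr_test = train_test_split(
    X_reg, y_reg, test_size=0.25, random_state=0
)
reg = RandomForestRegressor(n_estimators=100, random_state=0)
reg.fit(Xr_train, yr_train)
reg_r2 = reg.score(Xr_test, yr_test)  # R^2 on held-out data

print(f"classifier accuracy: {clf_accuracy:.3f}")
print(f"regressor R^2: {reg_r2:.3f}")
```

Both estimators share the same `fit` / `predict` / `score` interface. The main difference is what `score` reports: accuracy for the classifier and R² for the regressor.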

Key Concepts

- Decision Trees: Base learners in a Random Forest.

- Bagging (Bootstrap Aggregating): Each tree is trained independently on a bootstrap sample, a random sample of the training data drawn with replacement.

- Feature Randomness: At each split, a tree considers only a random subset of features, which reduces correlation between trees.

- Out-of-Bag (OOB) Error: Estimate of model performance computed on the training samples that a tree did not see in its bootstrap sample.

- Feature Importance: Measures the contribution of each feature to predictions.
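The concepts above map fairly directly onto scikit-learn parameters and attributes. The following is a minimal sketch on synthetic data: `bootstrap=True` enables bagging, `max_features="sqrt"` limits the features considered at each split, `oob_score=True` requests the out-of-bag estimate, and `feature_importances_` exposes impurity-based importances. The dataset and the parameter values are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data: with shuffle=False the first 3 columns are the informative ones.
X, y = make_classification(
    n_samples=500,
    n_features=10,
    n_informative=3,
    n_redundant=0,
    shuffle=False,
    random_state=42,
)

forest = RandomForestClassifier(
    n_estimators=200,
    bootstrap=True,        # bagging: each tree sees a bootstrap sample
    max_features="sqrt",   # feature randomness: subset of features per split
    oob_score=True,        # score each sample using trees that did not train on it
    random_state=42,
)
forest.fit(X, y)

oob_accuracy = forest.oob_score_
oob_error = 1.0 - oob_accuracy

# Impurity-based importances, normalized to sum to 1.
importances = forest.feature_importances_
ranking = np.argsort(importances)[::-1]  # feature indices, most important first

print(f"OOB error: {oob_error:.3f}")
for idx in ranking[:3]:
    print(f"feature {idx}: importance {importances[idx]:.3f}")
```

The OOB estimate comes at no extra data cost, since it reuses samples left out of each bootstrap draw. It is still worth confirming on a separate test set when one is available.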

Tutorials

- A Gentle Introduction to Random Forest in Python

• Implement Random Forest for classification and regression using scikit-learn, with clear code examples and explanations.

- Random Forest Classification with scikit-learn

• Hands-on guide: load your data, train RandomForestClassifier, evaluate performance and tune hyperparameters.

- Step-by-Step Guide to Random Forest in sklearn

• Walk through data prep, model building, feature importance, and hyperparameter tuning in Python.

Videos

• Live coding: import data, train a RandomForestClassifier, make predictions and evaluate accuracy.

• Step-by-step project structure: set up preprocessing, model training and deployment pipeline with Python.

• Hands-on session: implement Random Forest on a real dataset, tune parameters and visualize results in Python.

Applications

- Classification tasks like credit scoring, spam detection, and disease diagnosis.

- Regression tasks such as predicting house prices or stock prices.

- Feature selection and ranking important variables for interpretability.

- Ensemble modeling to improve performance and reduce overfitting.

Tips & Best Practices

- Use enough trees (n_estimators) for stable predictions; more trees reduce variance but increase training and prediction time.

- Tune max_depth and min_samples_split to prevent overfitting.

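- Random Forests are generally more robust to outliers and noisy data than a single decision tree. Support for missing values depends on the implementation, so check your library or impute missing values before training.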

- Use feature importance scores to reduce dimensionality and improve model efficiency.
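Two of the tips above, tuning `max_depth` and `min_samples_split` and using importance scores to drop features, can be sketched with scikit-learn's `GridSearchCV` and `SelectFromModel`. The grid values, the 3-fold cross-validation, and the `"median"` threshold are illustrative assumptions rather than recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.model_selection import GridSearchCV

X, y = make_classification(
    n_samples=300, n_features=12, n_informative=4, random_state=0
)

# Small, illustrative grid over the two hyperparameters named in the tips.
param_grid = {
    "max_depth": [3, 6, None],
    "min_samples_split": [2, 10],
}
search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    param_grid,
    cv=3,
    scoring="accuracy",
)
search.fit(X, y)
best_params = search.best_params_

# Keep only features whose importance is at or above the median importance.
selector = SelectFromModel(search.best_estimator_, prefit=True, threshold="median")
X_reduced = selector.transform(X)

print("best parameters:", best_params)
print("features kept:", X_reduced.shape[1], "of", X.shape[1])
```

After reducing the feature set, it is usually worth refitting and re-evaluating the model on the reduced data, since dropping features can change which hyperparameters work best.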