Logistic Regression

Overview

Learn the fundamentals of Logistic Regression with step-by-step tutorials, video guides, and practical applications.

Definition

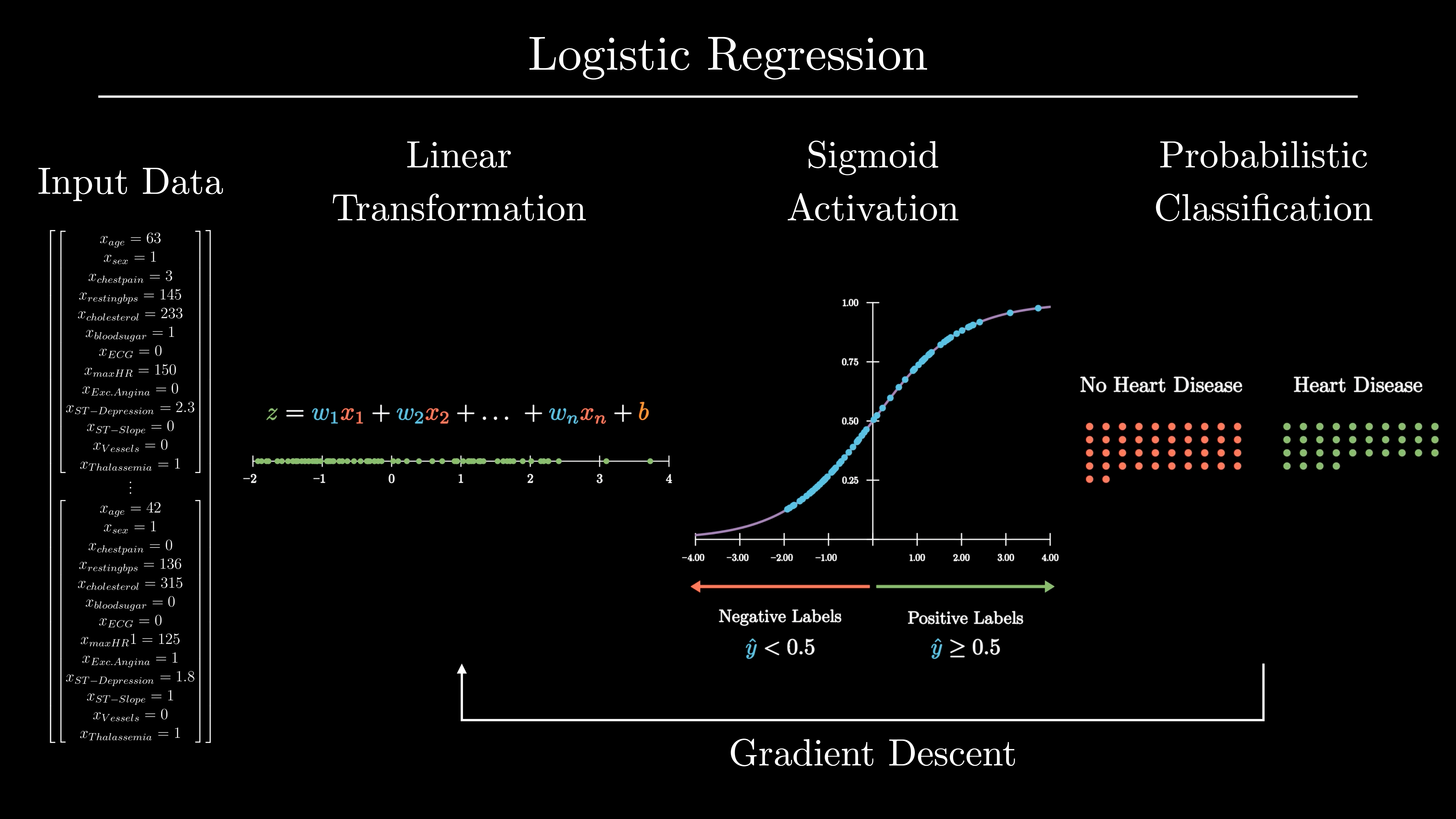

Logistic Regression is a supervised learning algorithm used for classification tasks. It models the probability that a given input belongs to a particular class using the logistic (sigmoid) function.

Types / Variants

- Binary Logistic Regression: Predicts one of two possible outcomes.

- Multinomial Logistic Regression: Predicts one of three or more unordered categories.
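
As a rough illustration of how the two variants relate, the sketch below uses the softmax function, the usual multi-class generalization of the sigmoid (the list above does not name it, so treat that pairing as an assumption of this example). The function names and the class scores are made up for illustration.

```python
import math

def sigmoid(z):
    """Binary case: probability of the positive class for score z."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Multinomial case: turn one score per class into probabilities."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Three hypothetical class scores; the resulting probabilities sum to 1.
probs = softmax([2.0, 1.0, 0.1])
predicted_class = probs.index(max(probs))

# With two classes and scores [z, 0], softmax reduces to the sigmoid.
two_class = softmax([2.0, 0.0])

print(probs, predicted_class)
print(two_class[0], sigmoid(2.0))
```

The binary model can therefore be seen as the two-class special case of the multinomial one.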

Key Concepts

- Sigmoid Function: Maps any real-valued number into the open interval (0, 1), so the output can be read as a probability.

- Log-Odds: The logarithm of the odds, log(p / (1 - p)). The model expresses it as a linear combination of the input features, and the sigmoid function converts it back into a probability.

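- Decision Boundary: The set of inputs where the predicted probability equals the classification threshold (commonly 0.5); inputs on either side are assigned to different classes.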

- Loss Function: Cross-entropy (log loss), which training minimizes and which can also be used to evaluate the predicted probabilities.

- Assumptions: Linear relationship between features and log-odds, independent observations, little or no multicollinearity among features.
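
The concepts above can be sketched in a few lines of plain Python. This is a minimal illustration and not a reference implementation: the function names, the example weights, and the clipping constant `eps` are all assumptions chosen for the example.

```python
import math

def sigmoid(z):
    """Map a real-valued score to a probability between 0 and 1."""
    # Branch on the sign of z so math.exp never overflows for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def log_odds(weights, bias, x):
    """Linear combination of the features: the model's log-odds."""
    return bias + sum(w * xi for w, xi in zip(weights, x))

def predict_proba(weights, bias, x):
    """Probability of the positive class."""
    return sigmoid(log_odds(weights, bias, x))

def predict_label(weights, bias, x, threshold=0.5):
    """Apply the classification threshold to the predicted probability."""
    return 1 if predict_proba(weights, bias, x) >= threshold else 0

def log_loss(y_true, y_prob, eps=1e-15):
    """Average binary cross-entropy between labels and probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)

# Hypothetical two-feature model and input.
weights, bias = [1.0, -1.0], 0.0
x = [2.0, 1.0]

z = log_odds(weights, bias, x)          # 1.0*2.0 + (-1.0)*1.0 + 0.0
p = predict_proba(weights, bias, x)
label = predict_label(weights, bias, x)
uninformed_loss = log_loss([1, 0], [0.5, 0.5])  # equals ln(2)

print(z, p, label, uninformed_loss)
```

A model that always predicts 0.5 scores a log loss of ln(2), about 0.693, which makes a handy baseline when judging a trained model.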

Tutorials

- Logistic Regression Tutorial for Machine Learning

• Learn how to build, train, and evaluate a logistic regression model from scratch in Python using gradient descent.

- Step-by-Step Guide to Logistic Regression in Python

• Walk through loading the Breast Cancer dataset, fitting a LogisticRegression, and interpreting output with scikit-learn.

- Logistic Regression in Python – Real Python

• Hands-on coding: prepare data, train your model, tune hyperparameters, and plot decision boundaries.
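
To give a feel for the from-scratch approach mentioned above, here is a minimal batch gradient descent sketch in plain Python. It is not taken from any of the listed tutorials; the function names, learning rate, epoch count, and toy dataset are all assumptions made for illustration.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def fit_logistic(X, y, lr=0.1, epochs=1000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_samples, n_features = len(X), len(X[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(bias + sum(w * v for w, v in zip(weights, xi)))
            error = p - yi  # gradient of the log loss w.r.t. the log-odds
            for j in range(n_features):
                grad_w[j] += error * xi[j]
            grad_b += error
        # Step against the average gradient.
        weights = [w - lr * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n_samples
    return weights, bias

def predict(weights, bias, x, threshold=0.5):
    """Classify one sample by thresholding its predicted probability."""
    p = sigmoid(bias + sum(w * v for w, v in zip(weights, x)))
    return 1 if p >= threshold else 0

# Toy one-feature dataset: negative values are class 0, positive are class 1.
X = [[-2.0], [-1.0], [-0.5], [0.5], [1.0], [2.0]]
y = [0, 0, 0, 1, 1, 1]

weights, bias = fit_logistic(X, y)
predictions = [predict(weights, bias, xi) for xi in X]
print(weights, bias, predictions)
```

Because this toy data is perfectly separable, the weight keeps growing with more epochs; on real data, regularization or early stopping usually keeps it in check.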

Videos

• Pranit Pawar walks you through implementing logistic regression from scratch in Python, epoch by epoch.

• Code a logistic model on real data: fit, predict and evaluate using scikit-learn’s API.

• Keith Galli demonstrates how to load Iris data, train a KNeighborsClassifier, and plot decision boundaries.

Applications

- Medical diagnosis (e.g., predicting disease presence/absence).

- Credit scoring (e.g., approve or reject loan applications).

- Marketing campaigns (e.g., predict customer response: yes/no).

- Binary image classification tasks.

Tips & Best Practices

- Always check for multicollinearity among input features.

- Feature scaling is not strictly necessary, but it helps gradient-based solvers converge and puts features on a comparable footing when regularization is applied.

- Use regularization (L1 or L2) to prevent overfitting in high-dimensional datasets.

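- Interpret model coefficients in terms of log-odds or odds ratios: with other features held fixed, a one-unit increase in a feature changes the log-odds by its coefficient, so exponentiating the coefficient gives the odds ratio.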
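
The tips above can be combined into one short scikit-learn sketch on the Breast Cancer dataset. Treat it as an illustration under stated assumptions, not a recommended recipe: the 0.9 correlation cutoff, the 25% test split, `C=1.0`, and `max_iter=1000` are arbitrary choices made for this example.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

data = load_breast_cancer()
X, y = data.data, data.target

# Multicollinearity check: count feature pairs with |correlation| above 0.9
# (the cutoff is an assumption for this example).
corr = np.corrcoef(X, rowvar=False)
upper = np.triu_indices_from(corr, k=1)
high_corr_pairs = int(np.sum(np.abs(corr[upper]) > 0.9))

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale the features, then fit. scikit-learn's LogisticRegression applies
# L2 regularization by default; C is the inverse regularization strength,
# so a smaller C means a stronger penalty.
model = make_pipeline(StandardScaler(), LogisticRegression(C=1.0, max_iter=1000))
model.fit(X_train, y_train)
accuracy = model.score(X_test, y_test)

# After scaling, each coefficient is the change in log-odds per one standard
# deviation of its feature; exponentiating gives the odds ratio.
coefs = model.named_steps["logisticregression"].coef_[0]
odds_ratios = np.exp(coefs)

print(f"highly correlated feature pairs: {high_corr_pairs}")
print(f"test accuracy: {accuracy:.3f}")
for name, ratio in sorted(zip(data.feature_names, odds_ratios), key=lambda t: t[1])[:3]:
    print(f"{name}: odds ratio {ratio:.2f}")
```

This dataset contains several strongly correlated features (radius, perimeter, and area measure closely related quantities), so individual odds ratios here should be read with caution.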